A short history of frame rates

The movie industry has embraced 24 frames per second (fps) since the addition of sound in 1926. Other frame rates have been tried since (e.g. The Hobbit, shot at 48fps), but almost every movie aimed at theatrical distribution has been created and mastered at 24fps. This is still the case today: even made-for-streaming content uses this cadence.

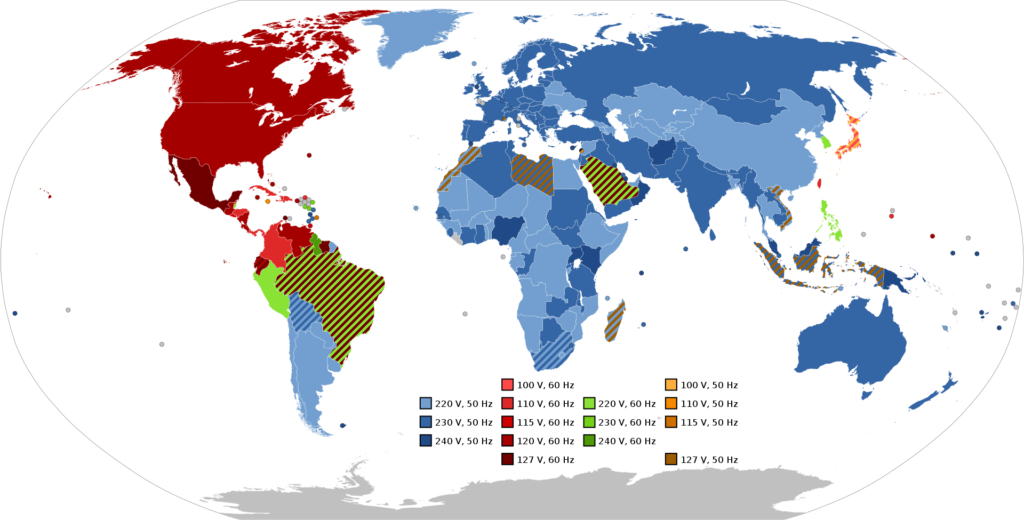

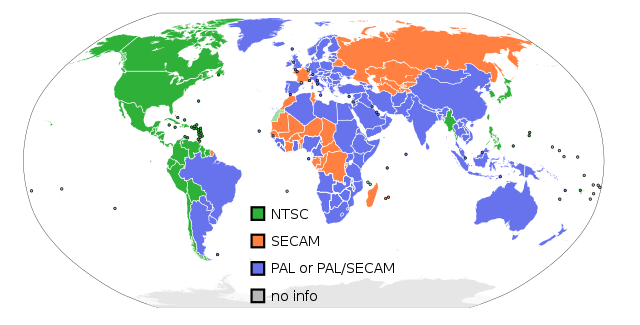

In the pre-color era, when the first televisions were designed for black-and-white images, the frame rate was based on the frequency of the electric grid: 60Hz for most of North and Central America, as well as Japan, and 50Hz for most of the rest of the world.

Since the signal was sent as half images (aka fields), two per frame, this translated to a frame rate of 30fps for the Americas and Japan and 25fps for the rest of the world. To convert a program running at 24fps to 25fps, one needs to speed it up by approximately 4.2% (25/24 ≈ 1.0417). To convert 24fps to 30fps, a process called 3:2 pull-down is used.
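To make the two conversions above concrete, here is a minimal Python sketch. The function name `pulldown_32` and the letter labels for film frames are illustrative assumptions, and the sketch ignores field parity (top/bottom field order), which real equipment has to respect.

```python
from fractions import Fraction

# Speed-up factor needed to play 24fps material at 25fps (about 4.17%).
speedup = Fraction(25, 24)

def pulldown_32(frames):
    """Spread film frames over interlaced fields in a repeating 2, 3 cadence."""
    fields = []
    for i, frame in enumerate(frames):
        # Even-indexed film frames fill 2 fields, odd-indexed ones fill 3.
        fields.extend([frame] * (2 if i % 2 == 0 else 3))
    # Pair consecutive fields into interlaced video frames.
    return [(fields[i], fields[i + 1]) for i in range(0, len(fields) - 1, 2)]

video = pulldown_32(["A", "B", "C", "D"])
print(video)
# [('A', 'A'), ('B', 'B'), ('B', 'C'), ('C', 'D'), ('D', 'D')]
```

Four film frames become five video frames, so 24 film frames become 30 video frames. Two of every five video frames mix fields from two different film frames, which is why pulled-down material can show combing on progressive displays.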

Fractional frame rates come into play

In 1953 a new NTSC (National Television System Committee) standard was adopted, which allowed for broadcasting in color while remaining compatible with the existing stock of black-and-white receivers. The idea was to carry color information on a sub-carrier that only color receivers would recognize. One problem emerged: the color sub-carrier could interfere with the audio signal and cause intermodulation beating. To avoid this issue, it was decided to reduce the frame rate by 0.1% (more precisely, by a factor of 1000/1001). The number of fields per second went from 60 to 59.94, which meant changing the frame rate from 30fps to 29.97fps. This is still in use, but only in the countries that still use the NTSC standard: USA, Canada, Mexico, Japan, etc.

In order to convert 24fps content to 29.97fps, it first has to be slowed down to 23.976fps and then converted to 29.97fps using the 3:2 pull-down process mentioned above. The problem is that a lot of recording devices (e.g. cameras), especially consumer and semi-professional ones, claim to run at 24fps while they actually use a rate of 23.976fps to be compatible with NTSC. In editorial, it is common to receive 23.976fps footage believed to have been recorded at 24fps, which then has to be converted (including the audio) to fit in the timeline.
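As a rough illustration of what that slowdown means for picture and sound, the sketch below computes the new running time and the effective audio sample rate when true 24fps material is played at 24000/1001 fps. The function names are hypothetical, and the 90-minute program and 48kHz audio are example values chosen only for illustration.

```python
from fractions import Fraction

# The NTSC slowdown factor, kept as an exact fraction.
NTSC_FACTOR = Fraction(1000, 1001)

def pulled_down_duration(seconds_at_24):
    """Running time in seconds after playing 24fps material at 24000/1001 fps."""
    frames = Fraction(seconds_at_24) * 24
    return frames / (24 * NTSC_FACTOR)

def pulled_down_sample_rate(sample_rate):
    """Effective audio sample rate when the audio is slowed by the same factor."""
    return sample_rate * NTSC_FACTOR

# A 90-minute (5400 s) program gains 5.4 seconds of running time.
print(float(pulled_down_duration(5400)))      # 5405.4
# 48 kHz audio plays back at roughly 47,952 samples per second.
print(float(pulled_down_sample_rate(48000)))
```

Audio that is not slowed by the same factor drifts out of sync by about 3.6 seconds per hour, which is why the conversion has to include the sound.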

Here is a link to a great video on the history of frame rates:

https://www.youtube.com/watch?v=mjYjFEp9Yx0

But how does one keep things in sync with fractional frame rates?

We can’t slow down time unfortunately, so the timecode has to be adjusted to account for the 0.1% slowdown. One hour of program at 30fps equals 108,000 frames, while the same program running at 29.97fps generates 107,892 frames. That is a difference of 108 frames, or 3.6 seconds of timecode. To avoid this error, timecode systems are designed so that, on average, once every 1000 frames, a frame number is dropped or omitted from the counting sequence. In practice, frame numbers 00 and 01 are skipped at the start of every minute except every tenth minute, which removes exactly 108 numbers per hour. No picture is discarded; only the labels are skipped. This is called ‘drop-frame timecode’.
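The counting scheme can be sketched in a few lines of Python. This is a minimal illustration for 29.97fps only, assuming the usual drop-frame convention of skipping frame numbers 00 and 01 at the start of every minute except minutes divisible by ten; the function name `frames_to_dropframe` is an assumption, not part of any particular library.

```python
def frames_to_dropframe(frame_count):
    """Convert a 29.97fps frame count to a drop-frame timecode label."""
    frames_per_10min = 30 * 600 - 18  # 17,982 real frames per ten-minute block
    frames_per_min = 30 * 60 - 2      # 1,798 real frames in a minute with a drop

    tens, rem = divmod(frame_count, frames_per_10min)
    skipped = 18 * tens               # 9 dropping minutes x 2 numbers per block
    if rem >= 1800:
        # The first minute of each block keeps all 1,800 numbers;
        # every later minute in the block skips two.
        skipped += 2 * ((rem - 1800) // frames_per_min + 1)

    n = frame_count + skipped         # label index counted as if at 30fps
    ff = n % 30
    ss = n // 30 % 60
    mm = n // 1800 % 60
    hh = n // 108000 % 24
    # A semicolon before the frame field conventionally marks drop-frame.
    return f"{hh:02d}:{mm:02d}:{ss:02d};{ff:02d}"

print(frames_to_dropframe(1799))    # 00:00:59;29
print(frames_to_dropframe(1800))    # 00:01:00;02
print(frames_to_dropframe(107892))  # 01:00:00;00
```

The last line shows the point of the scheme: after 107,892 real frames, the label reads exactly one hour.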

But, of course, not everyone wants or needs to skip frame numbers. Therefore ‘non-drop-frame timecode’, which counts every frame consecutively, remains in use alongside it. Having both ‘drop-frame’ and ‘non-drop-frame’ systems adds complexity and confusion to workflows, all because of fractional frame rates.

https://www.connect.ecuad.ca/~mrose/pdf_documents/timecode.pdf

https://web.archive.org/web/20040819005217/http://www.poynton.com/notes/video/Timecode/

Fractional frame rate calculation

It is important to note that 59.94fps, 29.97fps, and 23.976fps are approximations:

60 × (1000/1001) = 59.94005994005994005994005994005994005994005994005994…

30 × (1000/1001) = 29.97002997002997002997002997002997002997002997002997…

24 × (1000/1001) = 23.97602397602397602397602397602397602397602397602397…

The latter is often referred to as both 23.98 and 23.976 (sometimes even as just 23), but none of these reflects the exact number. Worse, some editing programs run at 23.98fps while others run at 23.976fps, creating even more issues down the line.
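One way to see why the rounding matters is to keep the rates as exact fractions and measure how far a rounded value drifts. The sketch below uses Python's standard `fractions` module; the helper names `ntsc_rate` and `drift_frames` are assumptions made for illustration.

```python
from fractions import Fraction

def ntsc_rate(nominal):
    """Exact fractional rate for a nominal rate, e.g. 24 -> 24000/1001."""
    return Fraction(nominal * 1000, 1001)

def drift_frames(approx, nominal=24, seconds=3600):
    """Frames of drift after `seconds` when `approx` replaces the exact rate.

    `approx` is passed as a string so the decimal is parsed exactly.
    """
    return (ntsc_rate(nominal) - Fraction(approx)) * seconds

for nominal in (60, 30, 24):
    print(nominal, ntsc_rate(nominal), float(ntsc_rate(nominal)))

# Drift over one hour for the two common roundings of 24000/1001.
print(float(drift_frames("23.976")))  # about 0.09 frames
print(float(drift_frames("23.98")))   # about -14.3 frames
```

Treating the rate as 23.976 costs less than a tenth of a frame per hour, while 23.98 is off by more than fourteen frames per hour, so tools that disagree on the value will not stay in sync over a long program.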